This past weekend I updated to the new XO-1 firmware image following the directions at this website. The process was simple and painless. I believe this was the first significant update since Sugar was spun off from the OLPC project, and I had long given up hope of seeing any significant updates for the XO-1.

I can’t say that this adds any dramatic features. They do offer a Switch to GNOME option now, which may be useful sometime, but I don’t think I want to run GIMP or Gnumeric on this machine all that much.

What it does offer is a lot less hassle. First up, the machine seems to boot faster. That is nice. I prefer not to reboot though, so pardon me for not being thrilled.

Second, they now offer an “Advanced Power Management” option that helps the battery life a good deal. Alas, it still doesn’t seem to want to happily sleep for days on end like Deb’s MacBook will, and it doesn’t even want to sleep as long as my Toshiba laptop, but still it is a lot better than no sleep mode. They now seem to have a sleep mode where the display is active, but the CPU sleeps after some time of inactivity. You can tell that it has done this because when you later hit a key, the screen will jump and there will be about a one-second pause before the keystroke shows up. Way cool, but I still want it to manage power well enough that I never have to power down and then back up. I guess a hibernate mode is out of the question though.

Potentially an even bigger deal is that it now does a much better job of remembering my wireless settings. Before, I’d have to re-choose the network after every reboot, and sometimes even between reboots, and I always had to re-enter the password, frequently even after the machine had just been sitting unused for a while. Now, I haven’t had to re-choose the network once, and I haven’t had to re-enter the wireless password either.

Application-wise, I don’t see any improvement in the web browser, the Activity (what they call a program) I use most. I do see a change in all activities: when you exit an activity, you are now asked to make a journal entry. I don’t like this at all.

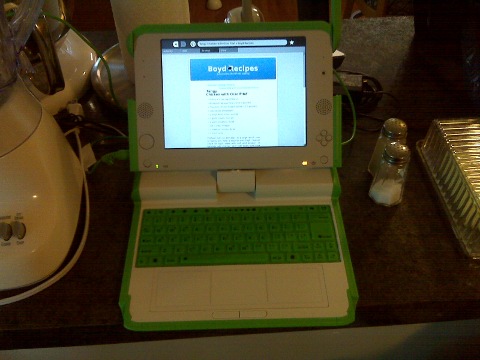

To be honest, I haven’t really looked at the education activities since the update, because I mostly use this as a kitchen recipe machine (which is why the picture shows the XO-1 between my toaster and salt and pepper). I figure that when he is a bit older, I’ll give this to David.

Since I never actually wrote a review of this machine, allow me to insert here that the display is really great (but obviously a bit small). Also, I found that the keyboard is nearly unusable. I can use the keyboard on similarly sized 9″ netbooks (albeit more slowly than a regular keyboard), but on this membrane keyboard I end up resorting to two-finger typing most of the time. Obviously this isn’t designed for me, but it does sap some of my motivation to actually get work done on this machine (although I have still taken it around to use as a terminal).

One thing I’d really love to see in the future would be a WebKit-based browser. I think that it would be a much better fit for this low-memory machine than the Gecko engine that is currently used. Another thing I really want is an email program. They take the view that kids don’t need email or can use webmail, but I’d like to see a decent email Sugar activity. Another nice one could be a calendar and address book activity. These probably aren’t important for most places, but I think that for American Sugar users (presumably running on non-OLPC hardware) this would be handy. Better still would be social networking support for these programs, as well as Twitter and Facebook clients. If such features were to be added, there probably should also be some system for parents to set limits on how various pieces are used.

I keep wanting to find time to set up a Sugar environment and actually work on writing some activities. A Twitter client, while frivolous, may be an excellent first activity. BTW, I’m @jdboyd. I need to add a sidebar link at some point.